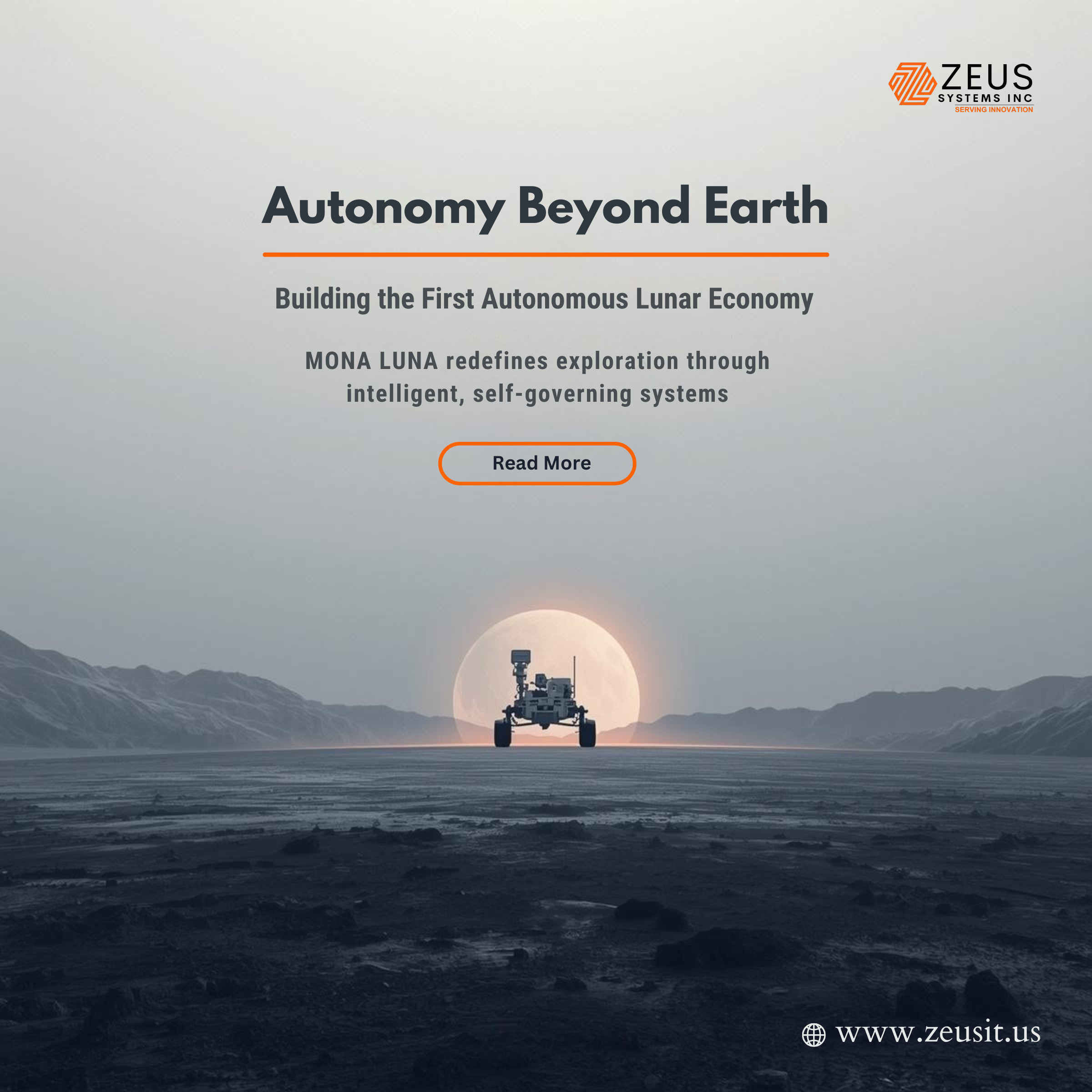

For decades, lunar exploration has been constrained by two fundamental challenges: extreme terrain unpredictability and dependence on human-controlled operations. While missions led by organizations like NASA and ISRO have successfully demonstrated robotic mobility on the Moon, the next leap forward demands something radically different: complete autonomy under hostile, unknown conditions.

Enter MONA LUNA, a next-generation AI-powered lunar rover system designed not just to explore, but to independently mine, adapt, and build the foundations of permanent off-world habitats without human intervention.

This is not an incremental improvement. It represents a paradigm shift: from remote-controlled machines to self-governing extraterrestrial industrial agents.

The Problem: The Moon Is Not Just Empty, It’s Unpredictable

Unlike Earth, the Moon presents a chaotic and unforgiving landscape:

- Jagged regolith with inconsistent density

- Craters with unstable slopes exceeding 30 degrees

- Electrostatic dust that interferes with sensors

- Extreme temperature gradients (-173°C to +127°C)

- Communication delays and blackout zones

Traditional rovers rely heavily on pre-mapped routes and human decision loops, which break down in such environments. Even slight terrain miscalculations can lead to immobilization, a fate suffered by multiple historical missions.

MONA LUNA addresses this not by improving mapping but by eliminating the need for certainty altogether.

MONA LUNA: A Self-Evolving Intelligence System

At its core, MONA LUNA is not merely a rover; it is a distributed AI cognition platform embedded within a physical mobility system.

Key Architectural Layers

- Perceptual Layer (LUNA-SENSE)
  - Multi-spectral terrain scanning
  - Subsurface radar for detecting voids and ice deposits
  - Dust-penetrating LiDAR alternatives

- Cognitive Layer (MONA Core AI)
  - Real-time terrain reasoning using probabilistic physics models
  - Self-learning navigation policies via reinforcement evolution
  - Contextual risk assessment (not just obstacle avoidance)

- Execution Layer (Adaptive Mobility System)
  - Shape-shifting wheel-leg hybrid actuators
  - Dynamic traction redistribution
  - Micro-adjustment balancing at millisecond intervals

- Swarm Intelligence Protocol (Optional Multi-Rover Mode)
  - Collective decision-making without central control
  - Resource allocation based on emergent needs
  - Failure compensation via peer adaptation
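To make the layering concrete, the following is a minimal Python sketch of a single perceive, reason, act cycle through the first three layers. It is illustrative only: the function names, the two observation fields, the risk weights and the 30° normalization (borrowed from the slope figure earlier) are hypothetical assumptions rather than MONA LUNA's actual interfaces, and the swarm layer is left out.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Perceptual-layer output (hypothetical fields)."""
    slope_deg: float      # local slope estimate from terrain scanning
    void_detected: bool   # True if subsurface radar flagged a void


@dataclass
class Command:
    """Execution-layer input (hypothetical fields)."""
    mode: str             # "drive", "climb" or "hold"
    speed: float          # fraction of maximum speed, 0..1


def perceive(raw: dict) -> Observation:
    # Perceptual layer: condense raw sensor readings into a terrain observation.
    return Observation(slope_deg=raw["slope_deg"], void_detected=raw["radar_void"])


def assess_risk(obs: Observation) -> float:
    # Cognitive layer: contextual risk score in [0, 1] with illustrative weights.
    risk = 0.7 * min(obs.slope_deg / 30.0, 1.0)
    if obs.void_detected:
        risk += 0.3
    return min(risk, 1.0)


def act(risk: float) -> Command:
    # Execution layer: translate the risk score into a mobility command.
    if risk >= 0.9:
        return Command(mode="hold", speed=0.0)
    if risk >= 0.5:
        return Command(mode="climb", speed=0.2)
    return Command(mode="drive", speed=1.0 - risk)


def control_step(raw: dict) -> Command:
    # One full pass through the three layers.
    return act(assess_risk(perceive(raw)))
```

Keeping each layer a separate function means the risk model can be swapped without touching perception or actuation, which is the point of a layered design.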

AI Navigation: Beyond Pathfinding

Traditional navigation answers: “How do I get from A to B?”

MONA LUNA instead asks:

“What is the safest, most energy-efficient, and mission-optimal way to exist within this terrain?”
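A common way to make that question computable is to fold safety and energy into one weighted cost and search for the cheapest route. The sketch below does this with plain Dijkstra on a grid; the per-cell `risk` and `energy` maps and the weights `w_risk` and `w_energy` are hypothetical, and this is a stand-in technique, not a description of MONA LUNA's actual planner.

```python
import heapq
import math


def plan(risk, energy, start, goal, w_risk=10.0, w_energy=1.0):
    """Dijkstra on a 4-connected grid.

    Entering cell (r, c) costs w_risk * risk[r][c] + w_energy * energy[r][c],
    so a short path through a dangerous cell can lose to a longer, safer detour.
    Returns (total_cost, path); path is [] if the goal is unreachable.
    Weights and cell values must be non-negative for Dijkstra to be correct.
    """
    rows, cols = len(risk), len(risk[0])
    best = {start: 0.0}
    parent = {}
    frontier = [(0.0, start)]
    while frontier:
        cost, cell = heapq.heappop(frontier)
        if cell == goal:
            path = [cell]
            while cell in parent:
                cell = parent[cell]
                path.append(cell)
            return cost, path[::-1]
        if cost > best[cell]:
            continue  # stale queue entry
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols:
                new_cost = cost + w_risk * risk[nr][nc] + w_energy * energy[nr][nc]
                if new_cost < best.get((nr, nc), math.inf):
                    best[(nr, nc)] = new_cost
                    parent[(nr, nc)] = cell
                    heapq.heappush(frontier, (new_cost, (nr, nc)))
    return math.inf, []


risk = [[0.0, 0.0, 0.0],
        [0.0, 0.9, 0.0],
        [0.0, 0.0, 0.0]]
energy = [[1.0] * 3 for _ in range(3)]
cost, path = plan(risk, energy, start=(1, 0), goal=(1, 2))
print(cost)  # 4.0: the detour around the risky center cell beats the direct route (11.0)
```

Raising `w_risk` makes the planner accept longer detours; lowering it trades safety for distance.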

1. Terrain Understanding as a Living Model

Instead of static mapping, MONA LUNA builds a continuously evolving terrain consciousness:

- Each interaction with the regolith updates soil behavior models

- Slopes are not just angles; they are probabilistic collapse zones

- Shadows are analyzed for temperature traps and energy risks
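The idea that every contact with the ground refines the soil model can be read as a sequential Bayesian update. As a minimal sketch, assuming each terrain cell stores a Gaussian belief over a single soil parameter (here a hypothetical expected wheel sinkage) and each contact yields a noisy measurement of it, the update is the one-dimensional Kalman measurement step. The prior values and noise level are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class SoilBelief:
    """Belief about one soil parameter in one terrain cell (hypothetical choice:
    expected wheel sinkage), stored as a Gaussian mean and variance."""
    mean: float
    var: float

    def update(self, measured: float, noise_var: float) -> "SoilBelief":
        # Conjugate Gaussian update, equivalent to a 1-D Kalman measurement step.
        gain = self.var / (self.var + noise_var)  # how far to move toward the new reading
        return SoilBelief(mean=self.mean + gain * (measured - self.mean),
                          var=(1.0 - gain) * self.var)


# A "living" terrain model: one belief per grid cell, refined on every contact.
terrain: dict[tuple[int, int], SoilBelief] = {}


def record_contact(cell, measured, noise_var=1.0, prior_mean=2.0, prior_var=4.0):
    # Unvisited cells start from a broad prior; visited cells sharpen over time.
    belief = terrain.get(cell, SoilBelief(prior_mean, prior_var))
    terrain[cell] = belief.update(measured, noise_var)
    return terrain[cell]
```

The variance shrinks with every contact, so frequently traversed ground becomes "known" while unvisited cells stay uncertain.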

2. Predictive Failure Simulation

Before taking a step, the AI runs thousands of micro-simulations:

- Wheel sink probability

- Slip vectors under varying torque

- Structural stress under uneven load

This enables preemptive adaptation, not reactive correction.
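The wheel-sink item can be illustrated with a small Monte Carlo loop. This sketch assumes the simplified Bekker pressure-sinkage relation p = k·zⁿ (the full form writes k as k_c/b + k_φ, with cohesive and frictional moduli and wheel width b) and treats the soil modulus k as normally distributed. The parameter values and the sinkage limit `z_max` are illustrative, units are left abstract, and slip and structural stress are not modeled.

```python
import random


def sink_probability(pressure, k_mean, k_sd, n=1.0, z_max=0.05,
                     samples=5000, seed=0):
    """Monte Carlo estimate of P(sinkage > z_max).

    Uses the simplified pressure-sinkage law p = k * z**n, i.e.
    z = (p / k) ** (1 / n), with the soil modulus k drawn from a normal
    distribution (clipped to stay positive) to represent soil uncertainty.
    """
    rng = random.Random(seed)  # fixed seed so repeated plans are reproducible
    exceed = 0
    for _ in range(samples):
        k = max(rng.gauss(k_mean, k_sd), 1e-9)
        z = (pressure / k) ** (1.0 / n)
        if z > z_max:
            exceed += 1
    return exceed / samples
```

A planner could compare this probability against a threshold before committing to a step, which is the "preemptive" behavior described above.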

3. Emotional AI Without Emotion

A groundbreaking concept: MONA LUNA uses synthetic “survival instincts”:

- “Caution bias” increases in unknown zones

- “Exploration drive” rises when resource probability spikes

- “Fatigue modeling” limits risk when energy reserves drop

This mimics biological resilience without human input.
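One way to read these "instincts" is as terms that widen or shrink a single risk budget. The function below is a hypothetical scalarization: the linear form, the gains and the 0.5 baseline are assumptions chosen for readability, not values from the source.

```python
def risk_tolerance(novelty: float, resource_prob: float, energy_frac: float,
                   base: float = 0.5, caution_gain: float = 0.3,
                   drive_gain: float = 0.3) -> float:
    """Combine three synthetic 'instincts' into one risk budget in [0, 1].

    novelty       0 (well mapped) .. 1 (never observed): caution bias lowers the budget
    resource_prob 0 .. 1 estimated chance of a deposit nearby: exploration drive raises it
    energy_frac   0 (empty) .. 1 (full): fatigue scales the whole budget down
    """
    caution = caution_gain * novelty
    drive = drive_gain * resource_prob
    budget = (base - caution + drive) * energy_frac
    return max(0.0, min(1.0, budget))


def accept(action_risk: float, novelty: float, resource_prob: float,
           energy_frac: float) -> bool:
    # Take the action only if its estimated risk fits inside the current budget.
    return action_risk <= risk_tolerance(novelty, resource_prob, energy_frac)
```

The multiplicative energy term means that near-empty reserves suppress risk-taking regardless of how promising a site looks.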

Conquering Uneven Terrain: The Mobility Revolution

MONA LUNA’s hardware is inseparable from its intelligence.

Hybrid Wheel-Leg System

- Wheels morph into clawed structures for steep climbs

- Independent articulation allows movement even if 50% of contact points fail

- Capable of traversing:
  - Loose dust plains
  - Rocky ejecta fields
  - Crater walls

Micro-Adaptive Suspension

Instead of passive suspension:

- Each joint reacts in real time to terrain feedback

- AI redistributes weight dynamically

- Prevents tipping even on shifting surfaces
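A standard check behind "prevents tipping" is static stability: the ground projection of the center of mass must stay inside the support polygon formed by the contact points. The sketch computes that margin with Andrew's monotone-chain convex hull; it ignores dynamics and slope-induced forces, and the contact coordinates in any example are hypothetical. A weight-redistribution controller would try to keep this margin positive and as large as possible.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def convex_hull(points: List[Point]) -> List[Point]:
    """Andrew's monotone chain; returns hull vertices counter-clockwise."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o: Point, a: Point, b: Point) -> float:
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower: List[Point] = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def stability_margin(com: Point, contacts: List[Point]) -> float:
    """Signed distance from the center-of-mass ground projection to the nearest
    support-polygon edge: positive means statically stable, negative means tipping."""
    hull = convex_hull(contacts)
    if len(hull) < 3:
        return -math.inf  # fewer than three non-collinear contacts: no support area
    margin = math.inf
    for i in range(len(hull)):
        (x1, y1), (x2, y2) = hull[i], hull[(i + 1) % len(hull)]
        ex, ey = x2 - x1, y2 - y1
        # Cross product is positive when com lies left of this counter-clockwise edge.
        d = (ex * (com[1] - y1) - ey * (com[0] - x1)) / math.hypot(ex, ey)
        margin = min(margin, d)
    return margin
```

Dropping every other contact of a six-point stance still leaves a tripod, and the margin stays positive as long as the center of mass remains inside that triangle.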

Self-Recovery Mechanisms

If immobilized:

- The rover reconfigures its geometry

- Uses controlled vibrations to escape regolith traps

- Calls swarm units (if available) for cooperative extraction
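These three responses read naturally as an escalation ladder, tried from cheapest to most costly. A minimal sketch, with hypothetical strategy names and each attempt modeled as a callable that reports whether the rover is free again:

```python
from typing import Callable, List, Optional, Tuple

# (name, attempt, needs_swarm): attempt() returns True if the rover is free again.
Strategy = Tuple[str, Callable[[], bool], bool]


def recover(strategies: List[Strategy], swarm_available: bool) -> Optional[str]:
    """Try recovery strategies in order; return the name of the one that worked."""
    for name, attempt, needs_swarm in strategies:
        if needs_swarm and not swarm_available:
            continue  # cooperative extraction only exists in multi-rover mode
        if attempt():
            return name
    return None  # still stuck: hand over to higher-level replanning


# Hypothetical ladder mirroring the list above.
ladder = [
    ("reconfigure_geometry", lambda: False, False),
    ("vibration_escape", lambda: True, False),
    ("swarm_extraction", lambda: True, True),
]
print(recover(ladder, swarm_available=False))  # vibration_escape
```

Ordering by cost keeps the rover from spending swarm time on situations it can resolve alone.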

Resource Mining: The True Mission

Exploration is no longer the goal; resource independence is.

Target Resources

- Water ice (for fuel and life support)

- Helium-3 (future fusion potential)

- Rare earth metals

Autonomous Mining Workflow

- Detection: subsurface scanning identifies high-probability resource zones.

- Validation: the AI performs micro-drills and analyzes samples in situ.

- Extraction:
  - Precision excavation minimizes energy waste
  - Dust suppression techniques prevent contamination

- Processing: onboard refinement into usable forms (e.g., water extraction, oxygen separation).

- Storage or Deployment: materials are either stored or used immediately for infrastructure.
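Because validation can fail, the workflow behaves more like a loop than a straight pipeline. The sketch below models it as a small state machine; the rule that a failed validation returns to detection, and the wrap-around after storage or deployment, are assumptions for illustration.

```python
from enum import Enum, auto
from typing import Iterable, List


class Stage(Enum):
    DETECTION = auto()
    VALIDATION = auto()
    EXTRACTION = auto()
    PROCESSING = auto()
    STORAGE_OR_DEPLOYMENT = auto()


def next_stage(stage: Stage, sample_ok: bool = True) -> Stage:
    """Advance one step; a failed in-situ validation sends the rover back to detection."""
    if stage is Stage.VALIDATION and not sample_ok:
        return Stage.DETECTION
    order = list(Stage)  # enum definition order
    return order[(order.index(stage) + 1) % len(order)]  # storage/deployment wraps to detection


def run_cycle(validation_results: Iterable[bool]) -> List[Stage]:
    """Trace one full cycle, consuming one validation result per VALIDATION visit.
    Raises StopIteration if validation_results runs out before a site is confirmed."""
    results = iter(validation_results)
    stage, trace = Stage.DETECTION, []
    while True:
        trace.append(stage)
        if stage is Stage.STORAGE_OR_DEPLOYMENT:
            return trace
        ok = next(results) if stage is Stage.VALIDATION else True
        stage = next_stage(stage, ok)
```

With validation results `[False, True]`, the trace visits detection and validation twice before extraction, processing and storage.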

Zero-Human Oversight: The Ultimate Leap

The defining feature of MONA LUNA is its ability to operate indefinitely without human control.

How This Is Achieved

- Autonomous Goal Setting: the system redefines mission priorities based on environmental feedback.

- Self-Healing Software: the AI rewrites parts of its own code within safe boundaries.

- Hardware Redundancy Intelligence: instead of backup systems, it uses adaptive repurposing (e.g., converting a failed sensor into a limited-function substitute).

- Ethical Constraint Layer: ensures mission alignment without human intervention.
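The source does not say how the constraint layer enforces alignment. One common pattern, sketched here under that assumption, is a hard veto between planning and execution that every self-generated action must pass, whether it comes from goal setting or from rewritten code. The per-zone extraction cap, the protected-zone set and the action dictionary layout are hypothetical examples of such rules.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Constraints:
    max_extraction_kg_per_zone: float
    protected_zones: Set[str] = field(default_factory=set)


def permitted(action: dict, limits: Constraints, extracted_kg: Dict[str, float]) -> bool:
    """Hard veto applied to every self-generated action before execution."""
    zone = action["zone"]
    if zone in limits.protected_zones:
        return False  # never enter or disturb protected zones
    if action["type"] == "extract":
        already = extracted_kg.get(zone, 0.0)
        return already + action["kg"] <= limits.max_extraction_kg_per_zone
    return True  # non-extraction actions only need the zone check
```

Because the veto sits outside the learning components, it keeps holding even if goal setting or self-modified code drifts.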

Building Permanent Off-World Habitats

MONA LUNA is not just a miner; it is a precursor to extraterrestrial civilization.

Infrastructure Capabilities

- Autonomous construction using regolith-based 3D printing

- Terrain leveling for landing zones

- Subsurface habitat carving for radiation protection

Energy Systems

- Solar field deployment optimized by AI

- Thermal energy storage in lunar regolith

Habitat Preparation

- Oxygen generation from lunar soil

- Water extraction and storage

- Structural integrity testing for human arrival

The Bigger Vision: A Self-Sustaining Lunar Ecosystem

Imagine a network of MONA LUNA units:

- Mining resources continuously

- Building infrastructure autonomously

- Repairing and replicating systems

- Expanding operations without Earth intervention

This would transform the Moon into a self-sustaining industrial outpost before humans even arrive.

Challenges and Ethical Considerations

Risks

- AI decision drift over long durations

- Resource over-extraction without oversight

- System-wide failure in swarm logic

Ethical Questions

- Should AI have autonomy in extraterrestrial environments?

- Who owns resources mined without human presence?

- Can self-evolving systems remain aligned with human intent?

These questions will define not just space exploration but the future of intelligence itself.

Conclusion: The Dawn of Autonomous Cosmic Industry

MONA LUNA represents a fundamental shift:

- From exploration to exploitation (in the constructive sense)

- From control to trust in autonomous intelligence

- From temporary missions to permanent presence

If successful, it will mark the moment humanity stopped visiting space and started living and building beyond Earth.